Cleaning up more than city streets

Building the data infrastructure that turned a siloed, multi-platform operation into a scalable, real-time product — and the decisions that made it possible.

Role

Product Manager/Designer

Timeline

April 2024 – September 2025

Skills

Systems design, data architecture, workflow automation

About Glitter

Glitter is a social impact cleaning service based in Philadelphia. They facilitate neighbor-funded cleanups for city blocks, hiring cleaners who often face barriers to employment and paying them a living wage. The model is inherently data-intensive: blocks, subscribers, pledges, billing cycles, cleaning schedules, and grant programs all need to move in sync.

As Product Manager, I owned the end-to-end product build, including the data architecture that made the member hub possible in the first place.

1k+

Users and city blocks unified into a single source of truth, consolidated from multiple disconnected platforms that shared no relational logic

0→1

Data infrastructure designed, built, and maintained entirely in-house — no engineering resources, no external dependencies

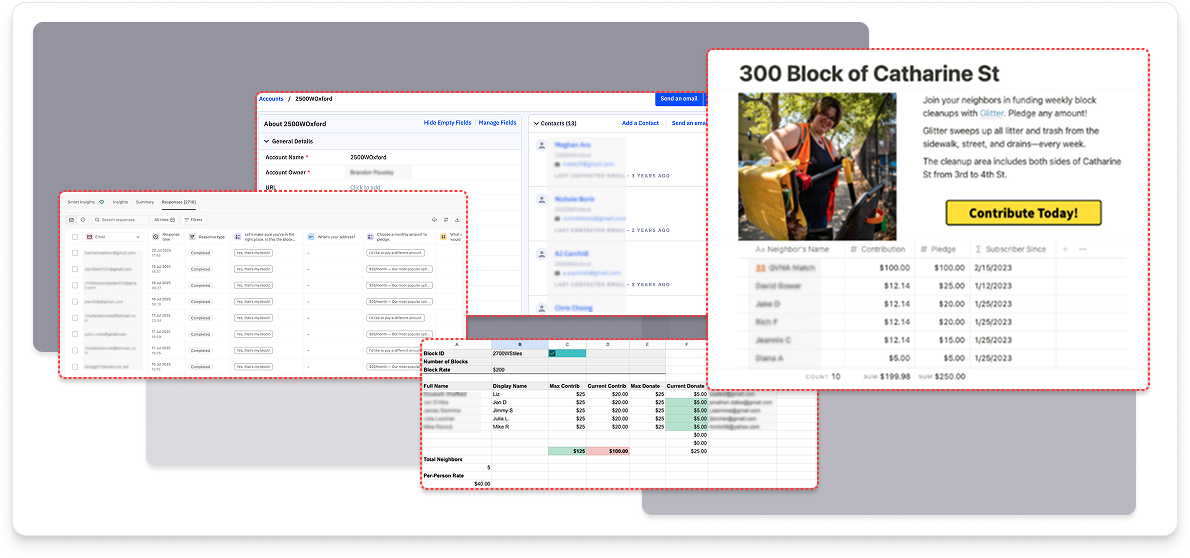

Before: fragmented workflows across multiple platforms

How might we build a data foundation strong enough to power a dynamic member portal web app, and flexible enough to scale to 1,000+ city blocks without breaking?

When I came onto the project, Glitter's operational data was scattered across multiple platforms with no relational logic connecting them. Every new city block required a custom build. Every subscriber update was a manual task. There was no single place to see the state of the business.

Subscriber data, billing records, operational notes: siloed, not queryable

City block and subscriber documentation: disconnected from any live data

Intake forms, city block and subscriber documentation: data exported manually, no automation

Email and subscriber communication and onboarding: maintained separately from block or billing data

No single source of truth — data fragmented across multiple platforms with no relational logic

City block pages were static and required a custom build per block — impossible to scale

Subscription and billing workflows depended entirely on manual imports and exports

No real-time visibility into block status, funding levels, or cleaner activity

Grant-funded initiatives couldn't be integrated into the same system as neighbor-funded blocks

The member hub would only be as good as the data underneath it. Before a single screen could be designed meaningfully, the data problem had to be solved. Making that case internally, leading with infrastructure over UI, was part of the work.

Infrastructure first. Product second.

The decision to lead with data architecture rather than UI was deliberate. A visually polished portal built on fragmented data would have been a liability: dynamic on the surface, broken underneath. The sequence mattered.

Data exported manually, no automation

Real-time features aren't real — they're manual

Grant and neighbor-funded blocks can't coexist

Every new city block is a new project

How the foundation was built

Airtable was selected after evaluating options against Glitter's constraints: in-house maintainability, no dedicated engineering resources, relational data support, and native integration with third-party tools. The decision wasn't just about features; it was about what the team could actually own and evolve without outside help.

I designed and built a comprehensive Airtable base from scratch, structuring relational tables across every operational domain. The architecture started with the two core entities that drive Glitter's business model: city blocks and the subscribers who fund them. Every relationship was designed around that logic first, then tested against two practical questions: does this support real-time updates, and does it connect cleanly to the third-party platforms the product would depend on?

Looking ahead mattered as much as the present. The data model had to account for Glitter's existing neighbor-funded block program and anticipate a grant-funded program that did not yet exist in the data set. Designing for both from the start meant the architecture could absorb new program types without restructuring, rather than having them bolted on later.

Simplified schema: key tables and linked record relationships.

Snapshot of resulting Airtable base and relational logic.
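The schema itself appears only as an image. As a rough, text-only illustration of the core idea, here is a minimal Python sketch in which blocks and subscribers are linked records and both funding programs share one Blocks table, distinguished by a program field. The class, field, and function names are hypothetical and not taken from the actual base.

```python
from dataclasses import dataclass, field
from enum import Enum


class Program(Enum):
    # Hypothetical program values; the case study names these two funding models.
    NEIGHBOR_FUNDED = "neighbor_funded"
    GRANT_FUNDED = "grant_funded"


@dataclass
class Block:
    block_id: str
    name: str
    program: Program  # both programs live in one Blocks table


@dataclass
class Subscriber:
    subscriber_id: str
    email: str
    # Linked records: a subscriber can fund more than one block.
    block_ids: list[str] = field(default_factory=list)


def summarize_by_program(blocks: list[Block],
                         subscribers: list[Subscriber]) -> dict[Program, dict[str, int]]:
    """Count blocks and distinct linked subscribers per funding program."""
    program_of = {b.block_id: b.program for b in blocks}
    summary = {prog: {"blocks": 0, "subscribers": 0} for prog in Program}
    for b in blocks:
        summary[b.program]["blocks"] += 1
    for s in subscribers:
        # A set ensures a subscriber is counted once per program,
        # even when linked to several blocks in the same program.
        for prog in {program_of[bid] for bid in s.block_ids}:
            summary[prog]["subscribers"] += 1
    return summary
```

Keeping both programs in one table, rather than a table per program, is what lets a cross-program rollup like this stay a single pass over the data; in this sketch, a new program type means a new enum value rather than a new table.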

Raw structure alone wasn't enough. I built automated workflows to keep data current and reduce manual intervention across the team, and where the logic exceeded what native Airtable automations could handle, I led the process of identifying the gap, scoping the work, and bringing in an Airtable specialist to implement it.

The most complex example was a contribution redistribution script: when pledge amounts changed on a given block, funds needed to be recalculated and redistributed automatically across subscribers. I researched and vetted candidates, led the scoping conversation, and integrated the delivered script into the existing workflow, ensuring it connected cleanly with the surrounding automation logic.
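The case study doesn't spell out the redistribution rule, and the script the specialist delivered isn't shown. The following is a minimal sketch under one stated assumption: each block has a fixed monthly cost, and that cost is split across subscribers in proportion to their pledges, in whole cents, using largest-remainder rounding so the shares always sum exactly to the cost. The function and parameter names are hypothetical.

```python
def redistribute(block_cost_cents: int, pledges_cents: dict[str, int]) -> dict[str, int]:
    """Split a block's cost across subscribers in proportion to their pledges.

    Assumed rule (not confirmed by the source). Largest-remainder rounding
    keeps every share in whole cents while guaranteeing the shares sum
    exactly to block_cost_cents.
    """
    if block_cost_cents < 0 or any(p < 0 for p in pledges_cents.values()):
        raise ValueError("cost and pledges must be non-negative")
    total_pledged = sum(pledges_cents.values())
    if total_pledged == 0:
        raise ValueError("at least one positive pledge is required")

    shares: dict[str, int] = {}
    remainders: list[tuple[int, str]] = []
    for sub_id, pledge in pledges_cents.items():
        # Exact integer arithmetic: floor of the proportional share plus its remainder.
        share, remainder = divmod(block_cost_cents * pledge, total_pledged)
        shares[sub_id] = share
        remainders.append((remainder, sub_id))

    # Cents lost to flooring (always fewer than the number of subscribers).
    leftover = block_cost_cents - sum(shares.values())
    # Give one extra cent to each of the largest remainders; ties go by subscriber id.
    for _, sub_id in sorted(remainders, key=lambda t: (-t[0], t[1]))[:leftover]:
        shares[sub_id] += 1
    return shares
```

Under this assumption, "recalculating when a pledge changes" is just calling the function again with the updated pledges: `redistribute(1000, {"a": 1, "b": 1, "c": 1})` yields 334, 333, and 333 cents.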

Automated funding redistribution logic when subscription amounts changed

Cross-table automations and scripts propagating block and subscriber status changes across dependent records

Validation rules on data entry to reduce human error at the point of input

Billing and reporting dashboards for internal ops, surfacing real-time changes that the siloed data sets couldn't show
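The specific validation rules mentioned above aren't listed. As an illustration only, here is a sketch of point-of-input checks for a hypothetical intake record; the field names (`email`, `block_id`, `monthly_pledge_cents`) and the rules themselves are assumptions. In Airtable, comparable checks might instead live in field types, form settings, or an automation script.

```python
import re
from dataclasses import dataclass

# Deliberately loose pattern: catches obvious typos, not full RFC 5322 validation.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


@dataclass
class IntakeRecord:
    # Hypothetical intake fields, for illustration only.
    email: str
    block_id: str
    monthly_pledge_cents: int


def validate_intake(record: IntakeRecord, known_block_ids: set[str]) -> list[str]:
    """Return human-readable problems; an empty list means the record passes."""
    errors = []
    if not EMAIL_RE.match(record.email.strip()):
        errors.append("email looks malformed")
    if record.block_id not in known_block_ids:
        errors.append(f"unknown block: {record.block_id!r}")
    if record.monthly_pledge_cents <= 0:
        errors.append("pledge must be a positive amount")
    return errors
```

Collecting every problem instead of stopping at the first lets a form show all corrections at once, which matters most when the person entering data isn't at a desk.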

How might we automatically give neighbors real-time proof that their block is being taken care of, without anyone on the team doing it manually?

The most visible proof that the data architecture worked, and the feature that most directly demonstrated Glitter's accountability to neighbors, was the real-time photo feed on each block's page in the member portal.

The challenge: cleaners are in the field, not at a desk. The workflow needed to be low-friction on their end, reliable in the middle, and fully automated on the display side.

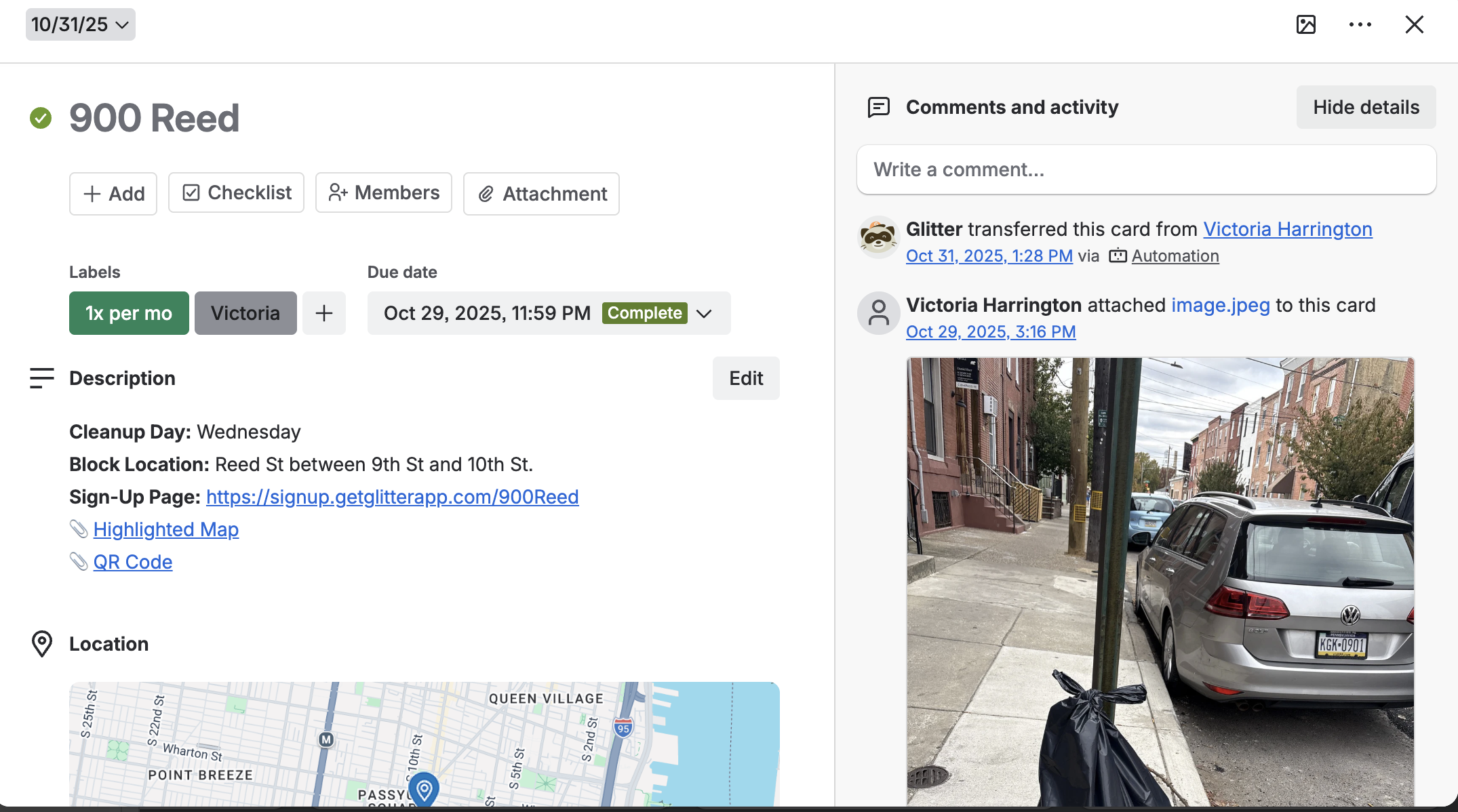

Cleaner submits photos to a block-specific Trello card upon cleanup completion

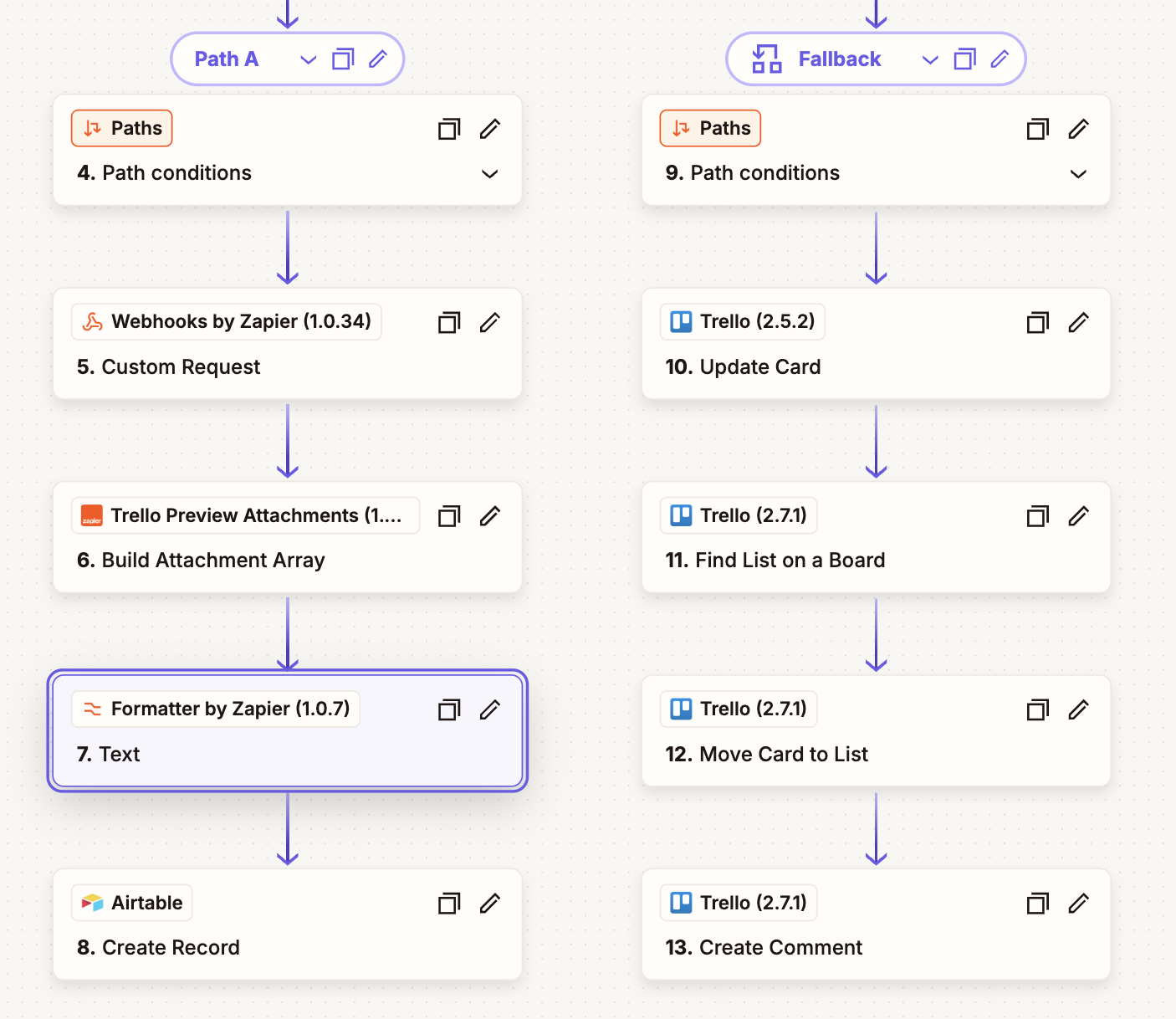

Zap triggers on card completion and pushes the photos, cleaner, and block data to Airtable

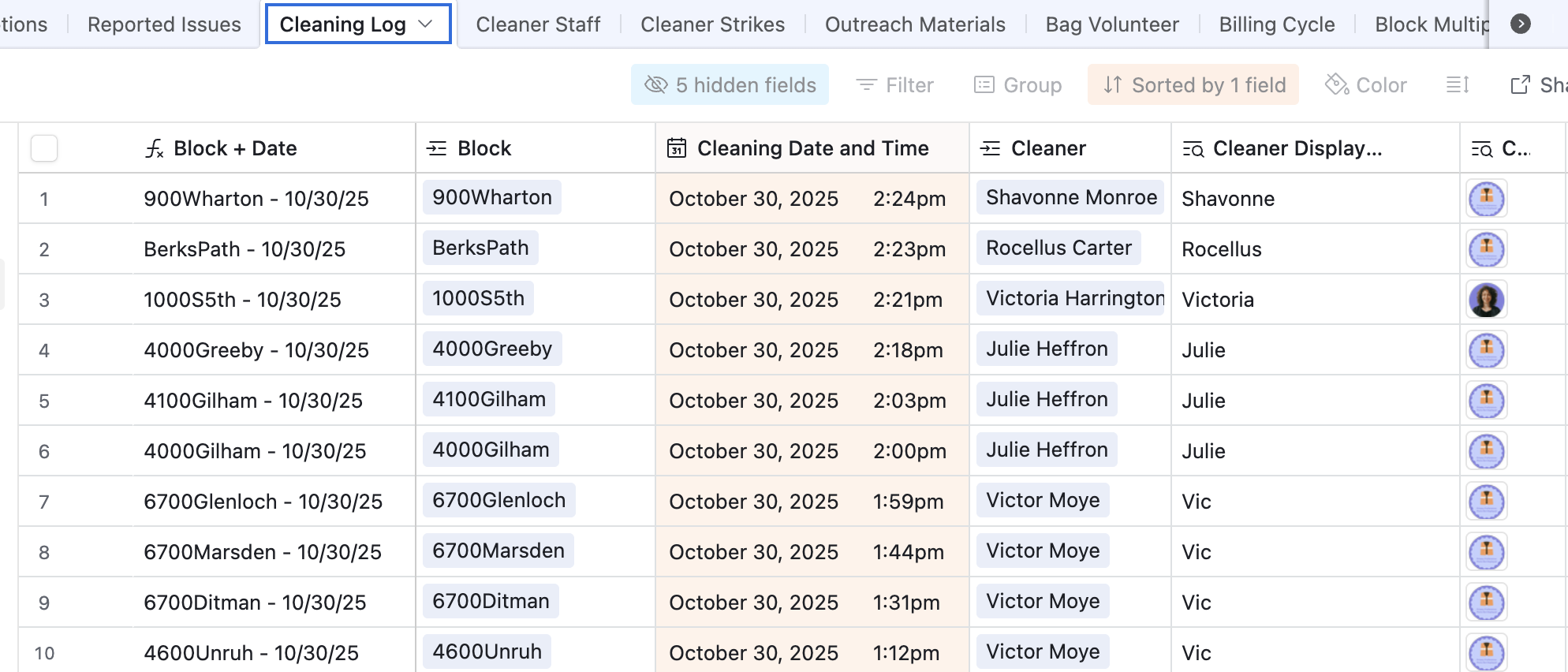

Photo stored in Cleaning Log table, linked to the correct block record

Block page auto-updates so the most recent cleanup photos surface to neighbors

Trello card structure: block-specific cleanup workflow

Zapier automation: Trello to Airtable connection

Airtable Cleaning Log table: photo records linked to block records and assigned cleaner

Putting it all together: workflow automation sequence for block cleaning
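In the real pipeline, Zapier maps fields in its own editor rather than in code, so the following is only a model of the data flow, not an implementation of it. It sketches two steps from the sequence above: turning a completed card into a Cleaning Log record linked to a block, and selecting the newest photos for that block's page. The payload shape (`block_id`, `cleaner`, `attachments`, `completed_at`) and all names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class CleaningLogEntry:
    block_id: str          # link to the block record
    cleaner: str           # assigned cleaner
    photo_urls: list[str]
    completed_at: datetime


def card_to_log_entry(card: dict) -> CleaningLogEntry:
    """Map a completed-card payload (hypothetical shape) to a Cleaning Log record."""
    return CleaningLogEntry(
        block_id=card["block_id"],
        cleaner=card["cleaner"],
        photo_urls=[a["url"] for a in card.get("attachments", [])],
        completed_at=datetime.fromisoformat(card["completed_at"]),
    )


def latest_photos(log: list[CleaningLogEntry], block_id: str, limit: int = 6) -> list[str]:
    """Photos for one block, newest cleanup first, as a block page might show them."""
    entries = sorted(
        (e for e in log if e.block_id == block_id),
        key=lambda e: e.completed_at,
        reverse=True,
    )
    photos: list[str] = []
    for e in entries:
        photos.extend(e.photo_urls)
    return photos[:limit]
```

Because the display step only reads from the log, the block page stays current without anyone touching it: each completed card adds a record, and the next page load picks it up.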

What the infrastructure made possible.

Centralized data foundation spanning 1,000+ users and city blocks, consolidated from disconnected platforms into a single, relational source of truth

Eliminated fragmented multi-platform workflows; manual data handling across billing, subscriptions, and block onboarding reduced to near zero

Grant-funded and neighbor-funded initiatives running through the same architecture, making unified reporting and cross-program analytics possible

Real-time cleaning accountability visible to every member without any manual updates from the operations team

Scalable backbone in place for future grant applications and product expansion

Key takeaways from building at the intersection of design, data, and operations.

01

Getting stakeholders to agree on how data should be structured — what a "pledge" is, how a "contribution" relates to it, what counts as "funded" — was harder than building the tables themselves. That alignment work wasn't adjacent to product work. It was the product work.

definitions before schemas

02

Fragmented systems don't just slow you down; once you scratch the surface, they make entire categories of product decisions impossible. Real-time data, dynamic templating, grant reporting, and automated billing changes were all blocked until the architecture was in place. Solving the data problem unlocked the roadmap.

fix the foundation to unlock features

03

Softr's dynamic capabilities are real, but they depend entirely on well-structured, relational data in Airtable. The product quality visible to the end user was a direct reflection of decisions made at the schema level. Design and data aren't sequential; they're co-designed.

schema = design

04

The real-time photo pipeline worked because it was designed around how cleaners actually operate in the field. The constraint of using tools the team already had became a feature: no new training, no adoption curve, just a better-connected version of existing behavior.

meet people where they are

The foundation is in place. The opportunity now is refinement and scale.

01

Deeper exploration of timestamp and geolocation capture within the Trello → Airtable workflow to add a verifiable audit layer to every cleaning record.

02

Targeted research across grant-funded initiatives to identify adoption blockers and assess how the pledge model could evolve toward measurable community endorsement instead of dollars.

03

Leverage the data infrastructure beyond visibility — using transparency as a tool to drive additional pledges, grant alignment, and operational efficiency at scale.

04

Surface existing Airtable data into a richer internal dashboard, giving leadership and stakeholders real-time visibility into program performance without requiring direct database access.
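Item 01 above is still exploratory, and nothing in the current Trello → Airtable workflow is known to capture location. Purely as an assumption about how such an audit layer could work, this sketch checks whether a photo was taken near the block (great-circle distance via the haversine formula) and inside the scheduled cleaning window. The 150 m radius, the window, and every name below are hypothetical.

```python
import math
from datetime import datetime

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    # min() guards against floating-point values a hair above 1.
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(a)))


def audit_cleaning(photo_lat: float, photo_lon: float, taken_at: datetime,
                   block_lat: float, block_lon: float,
                   window_start: datetime, window_end: datetime,
                   max_distance_m: float = 150.0) -> dict[str, bool]:
    """Flag whether a photo was taken near the block and inside the scheduled window."""
    distance = haversine_m(photo_lat, photo_lon, block_lat, block_lon)
    return {
        "near_block": distance <= max_distance_m,
        "in_window": window_start <= taken_at <= window_end,
    }
```

Returning separate flags rather than a single pass/fail keeps the audit informative: a photo taken on the right block but outside the window points to a scheduling issue, not a missed cleanup.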